Jerry Jones gives mixed signals on Cowboys’ trade deadline approach

FOX Super 6 NFL contest: Chris ‘The Bear’ Fallica’s Week 8 picks

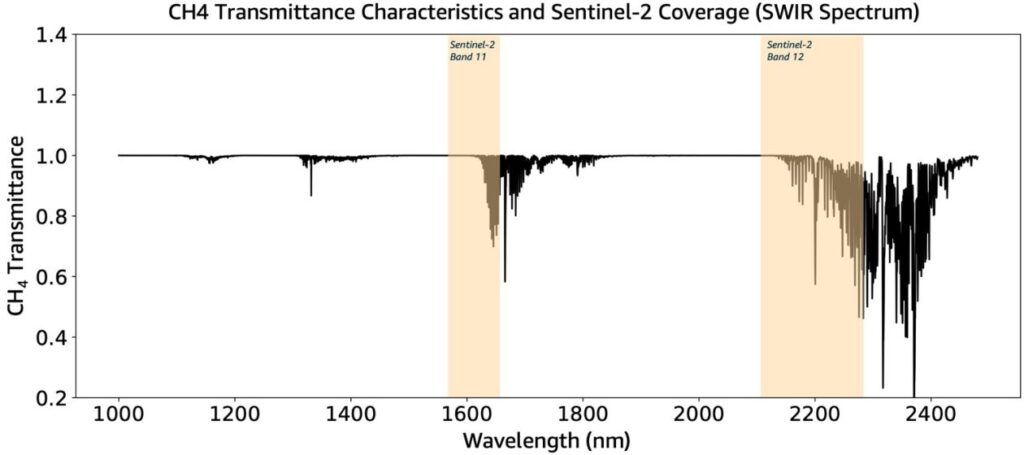

Detection and high-frequency monitoring of methane emission point sources using Amazon SageMaker geospatial capabilities

Lincoln Riley says he’s been ill with pneumonia, hopes to be back for USC-Cal

Taylor Swift Releases Rerecorded Album ‘1989 (Taylor’s Version)’: See the Bonus Tracks!

Michigan sign-stealing scandal could have been avoided with in-game technology

Clippers reportedly pausing James Harden trade talks ‘for foreseeable future’

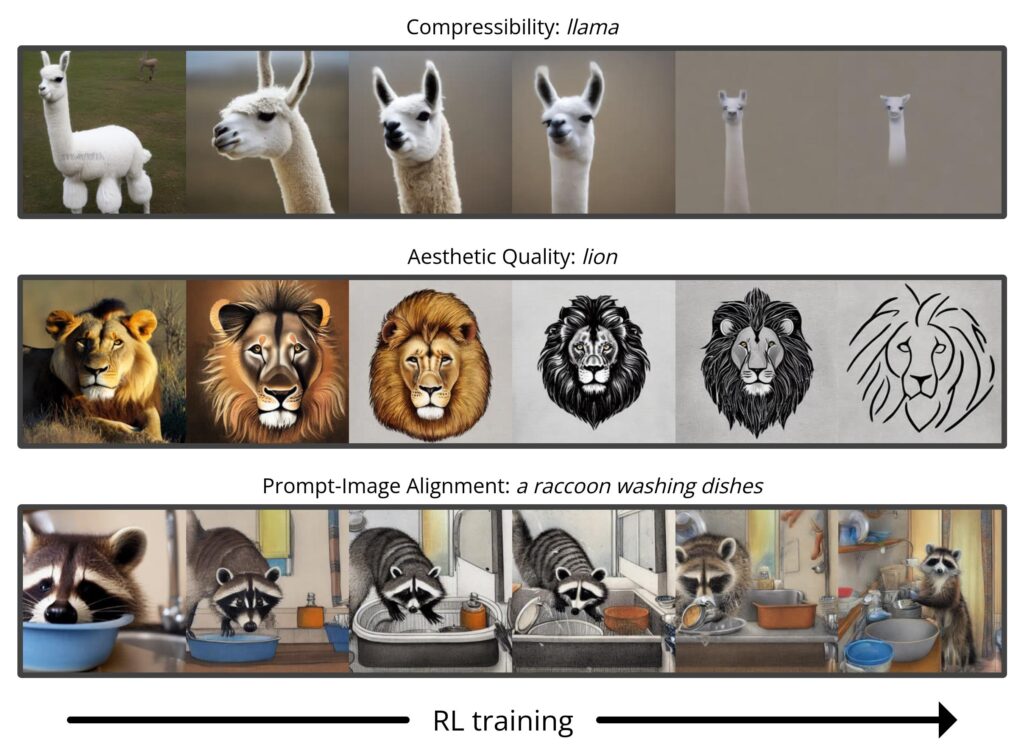

Training Diffusion Models with Reinforcement Learning – The Berkeley Artificial Intelligence Research Blog

Diffusion models have recently emerged as the de facto standard for generating complex, high-dimensional outputs. You may know them for their ability to produce stunning AI art and hyper-realistic synthetic images, but they have also found success in other applications such as drug design and continuous control. The key idea behind diffusion models is to iteratively transform random noise into a sample, such as an image or protein structure. Training is typically framed as a maximum likelihood estimation problem, in which the model learns to generate samples that match the training data as closely as possible.
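To make the "iteratively transform random noise into a sample" idea concrete, here is a toy sketch of the sampling loop in plain Python. It is not a real diffusion model: `toy_denoise_step`, `sample`, and the fixed `target` vector are hypothetical stand-ins, and a real model would replace the hand-written step with a trained neural network that predicts the noise to remove.

```python
import random


def toy_denoise_step(x, t, num_steps, target, rng):
    """Hypothetical stand-in for a learned denoiser.

    Moves each coordinate part of the way toward `target` and adds a small
    amount of fresh noise that shrinks to zero as t approaches 0.
    """
    noise_scale = 0.1 * t / num_steps
    return [
        xi + (ti - xi) / (t + 1) + noise_scale * rng.gauss(0.0, 1.0)
        for xi, ti in zip(x, target)
    ]


def sample(target, num_steps=50, seed=0):
    """Start from pure Gaussian noise and refine it step by step."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # initial random noise
    for t in range(num_steps - 1, -1, -1):     # t = num_steps-1, ..., 0
        x = toy_denoise_step(x, t, num_steps, target, rng)
    return x
```

Calling `sample([1.0, -2.0, 0.5])` starts from noise and ends at the target vector. The only point of the sketch is the loop structure: many small denoising steps, each conditioned on the current timestep.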

However, most use cases of diffusion models are not directly concerned with matching the training data, but instead with a downstream objective. We don’t just want an image that looks like existing images, but one that has a specific type of appearance; we don’t just want a drug molecule that is physically plausible, but one that is as effective as possible. In this post, we show how diffusion models can be trained on these downstream objectives directly using reinforcement learning (RL). To do this, we finetune Stable Diffusion on a variety of objectives, including image compressibility, human-perceived aesthetic quality, and prompt-image alignment. The last of these objectives uses feedback from a large vision-language model to improve the model’s performance on unusual prompts, demonstrating how powerful AI models can be used to improve each other without any humans in the loop.
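As a rough illustration of optimizing a sampler for a downstream reward rather than for likelihood, the sketch below applies a score-function (REINFORCE-style) policy gradient to a one-dimensional Gaussian sampler. This is an assumption chosen for illustration, not the algorithm used in the post, which this section does not spell out; `reward`, `finetune_mean`, and all hyperparameters are hypothetical.

```python
import random


def reward(x):
    """Hypothetical downstream objective: prefer samples close to 3.0."""
    return -(x - 3.0) ** 2


def finetune_mean(mu=0.0, sigma=1.0, lr=0.05, steps=200, batch=64, seed=0):
    """Adjust the sampler's mean to increase expected reward.

    Uses the score-function estimator: for a Gaussian with fixed sigma,
    d/d(mu) log p(x) = (x - mu) / sigma**2.
    """
    rng = random.Random(seed)
    for _ in range(steps):
        xs = [rng.gauss(mu, sigma) for _ in range(batch)]   # sample
        rs = [reward(x) for x in xs]                        # score samples
        baseline = sum(rs) / batch                          # reduces variance
        grad = sum(
            (r - baseline) * (x - mu) / sigma ** 2 for x, r in zip(xs, rs)
        ) / batch
        mu += lr * grad                                     # gradient ascent
    return mu
```

The sampler never sees training data here; it only receives a scalar reward for each sample it produces, and its mean drifts toward the high-reward region around 3.0. In the setting described above, the reward would instead come from a measure such as compressibility, an aesthetic predictor, or a vision-language model, and the sampler would be the diffusion model itself.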