Personalize your search results with Amazon Personalize and Amazon OpenSearch Service integration

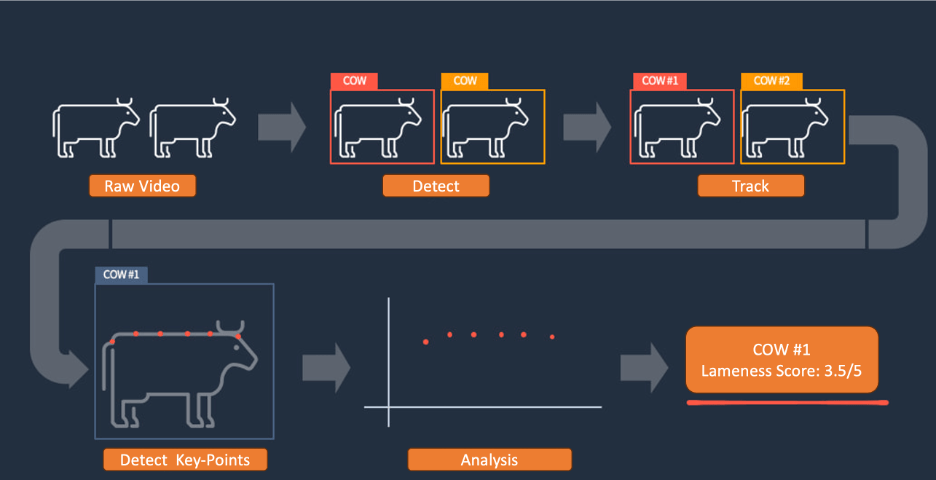

Keeping an eye on your cattle using AI technology

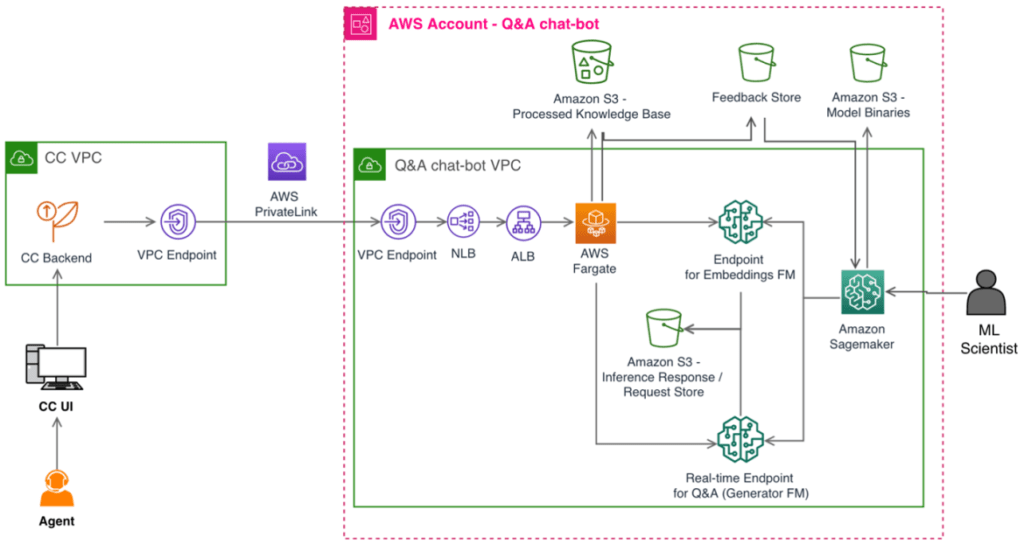

Learn how Amazon Pharmacy created their LLM-based chat-bot using Amazon SageMaker

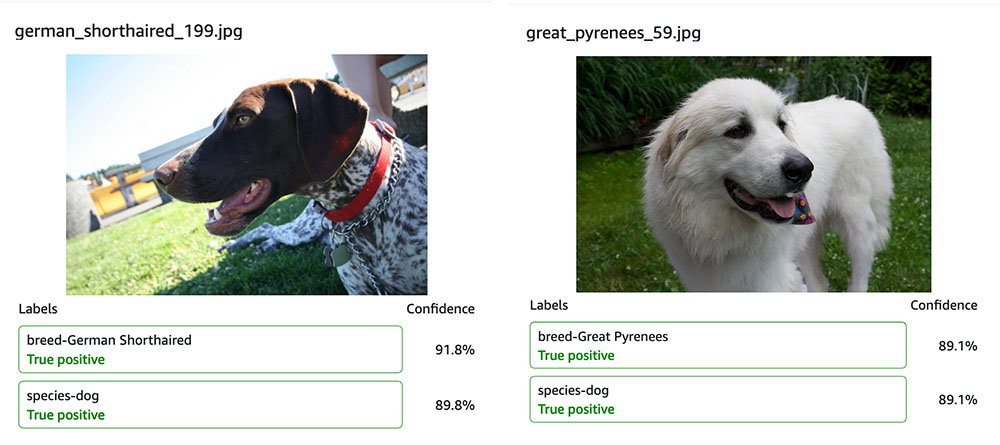

Optimize pet profiles for Purina’s Petfinder application using Amazon Rekognition Custom Labels and AWS Step Functions

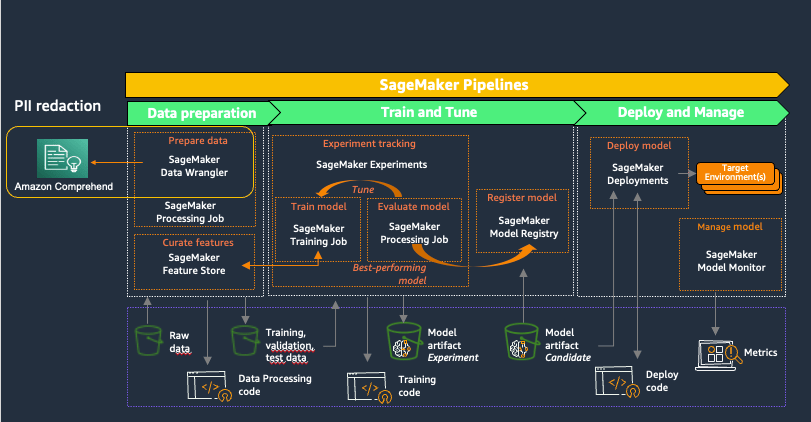

Automatically redact PII for machine learning using Amazon SageMaker Data Wrangler

Defect detection in high-resolution imagery using two-stage Amazon Rekognition Custom Labels models

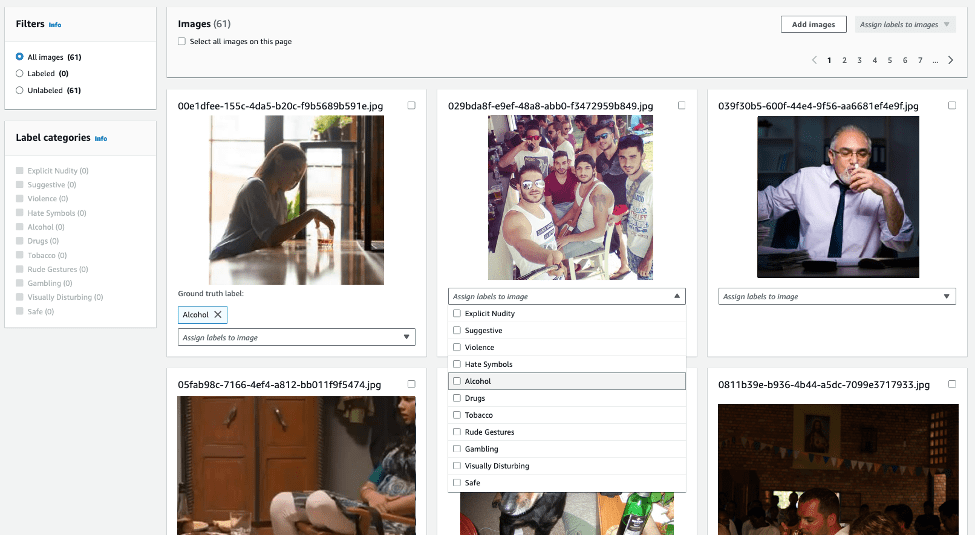

Announcing Rekognition Custom Moderation: Enhance accuracy of pre-trained Rekognition moderation models with your data

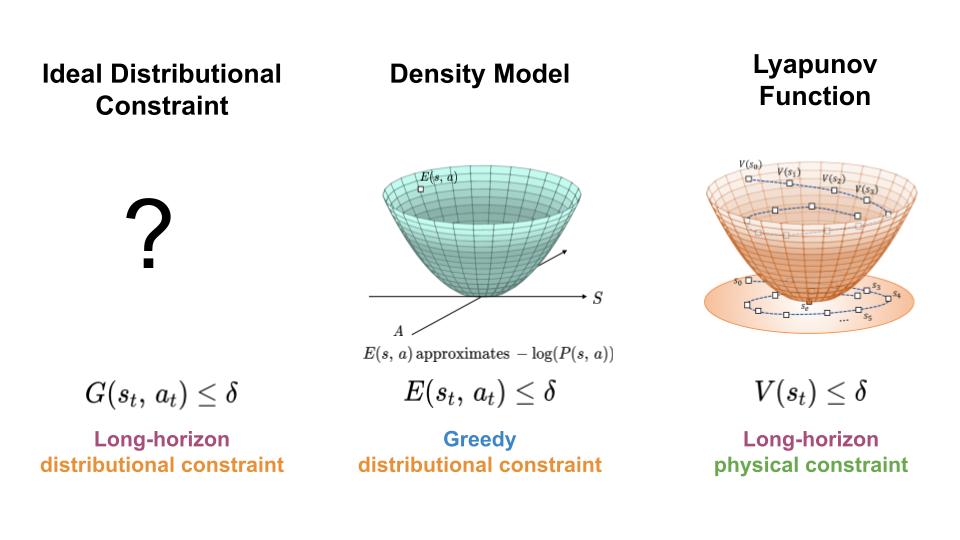

Keeping Learning-Based Control Safe by Regulating Distributional Shift – The Berkeley Artificial Intelligence Research Blog

To regulate the distribution shift experienced by learning-based controllers, we seek a mechanism for constraining the agent to regions of high data density throughout its trajectory (left). Here, we present an approach that achieves this goal by combining features of density models (middle) and Lyapunov functions (right).

To use machine learning and reinforcement learning for controlling real-world systems, we must design algorithms that not only achieve good performance but also interact with the system safely and reliably. Most prior work on safety-critical control focuses on maintaining the safety of the physical system, e.g., keeping legged robots from falling over or autonomous vehicles from colliding with obstacles. However, learning-based controllers have another source of safety concern: because machine learning models are only optimized to output correct predictions on the training data, they are prone to erroneous predictions when evaluated on out-of-distribution inputs. Thus, if an agent visits a state or takes an action that is very different from those in the training data, a learning-enabled controller may “exploit” the inaccuracies in its learned component and output actions that are suboptimal or even dangerous.
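As a toy illustration of this failure mode (not the method presented in this post), the sketch below fits a straight line to samples of `sin(x)` drawn only from `[0, 1]`, then compares the prediction error at an in-distribution input and at an input far outside the training range. It also computes a simple Gaussian kernel density estimate of the training inputs to show that the large error coincides with low data density. The target function, the training interval, the bandwidth, and the helper names `fit_line`, `kde_density`, and `prediction_error` are all assumptions chosen for illustration.

```python
import math

# Training inputs: 50 evenly spaced points in [0, 1] (an illustrative choice).
train_x = [i / 49 for i in range(50)]
train_y = [math.sin(x) for x in train_x]


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a * x + b (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x


def kde_density(x, data, bandwidth=0.1):
    """Gaussian kernel density estimate of the training inputs at x."""
    norm = 1.0 / (len(data) * bandwidth * math.sqrt(2.0 * math.pi))
    return norm * sum(math.exp(-0.5 * ((x - d) / bandwidth) ** 2) for d in data)


# The "learned component": a linear model fit only on the training range.
a, b = fit_line(train_x, train_y)


def prediction_error(x):
    """Absolute error of the fitted line against the true function at x."""
    return abs((a * x + b) - math.sin(x))


# Compare an in-distribution input (0.5) with an out-of-distribution one (4.0).
for x in (0.5, 4.0):
    print(f"x={x}: density={kde_density(x, train_x):.3g}, "
          f"error={prediction_error(x):.3g}")
```

In this toy setting the model is accurate where the training data is dense and badly wrong where the density is close to zero, which is why a density estimate is a natural signal for keeping an agent within the region its learned components can be trusted.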